In this session, Irina Cismas, Head of Marketing at Custify, was joined by Henry Dillon and Fred Busche, Global Customer Success Managers at Maptek, to explore what it really takes to scale customer success in a complex, multi-product software business.

The conversation focused on the operational reality many CS teams face but rarely see reflected in standard advice: multiple products, mixed licensing models, regional differences, fragmented data, and customer knowledge that often lives inside individual relationships rather than shared systems. Together, they unpacked how to move from reactive, relationship-led customer management toward a more proactive and scalable CS model, without pretending complexity can be eliminated overnight.

Summary points

Complexity changes the CS playbook: Traditional SaaS advice breaks down in environments with multiple products, mixed hardware-software setups, hybrid licensing models, and diverse user personas. Each product and persona can require a different path to value.

Healthy customer relationships need more than usage data: At Maptek, customer health depends on combining communication quality, product usage, support signals, and account context. Strong relationships still matter, but they need to be supported by shared visibility.

The shift to CS was driven by business model change: Moving from long-term transactional and perpetual-license relationships toward subscription models forced the team to rethink how they deliver value, track health, and stay proactive.

Visibility was the first real upgrade: Custify helped consolidate scattered data into a clearer account-level view. That gave teams faster access to support, communication, and product signals without forcing them to search across multiple systems.

Data hygiene started with prioritization, not perfection: The team did not begin with clean data. They started by identifying the most useful records and signals first, then layered in CRM data, product telemetry, support history, and account notes over time.

More data did not mean better insight: Thousands of telemetry points created noise, not clarity. Real progress came when the team simplified their model and tied signals to user outcomes and business outcomes instead of tracking everything available.

Early automation did not always work: A risk mitigation workflow based on health score changes proved too complex too early. It generated too many tasks and exposed maturity gaps, but it also helped the team learn how dynamic their data really was.

Podcast transcript

Intro

Irina (0:03 – 1:47)

Hello everyone, welcome. I’m Irina Cismas, Head of Marketing at Custify, and I’ll be your host for the next hour. Today, we are talking about something that I think a lot of CS teams experience but don’t often see reflected in the advice they read.

Most customer success advice out there assumes a pretty clean setup. One product, pure SaaS ideally, a clear user journey, nice, tidy data. But what happens when that’s not your reality?

What happens when you have multiple products, each with its own technical debt? When some of your customers are on subscriptions, but others are still on legacy licenses? When the knowledge about what’s going on with an account lives in the head of one specialist, who at some point decides to leave the company?

And of course, all the know-how leaves with him. That’s the reality my guests deal with every day. And honestly, it’s a reality many CS teams will probably recognize.

I’m joined today by Henry Dillon and Fred Busche. They are both Global Customer Success Managers at Maptek, a 40-plus-year-old mining technology company that builds software and hardware for the mining industry. Henry, Fred, thank you for taking the time to join us today.

Really happy to have you both here.

Henry (1:47 – 1:48)

Thanks for having us.

Irina (1:50 – 4:13)

In the next hour, we are going to dig into what it looks like when CS scales in this kind of environment. We’ll talk about where the old relationship-led model hits its limit, what changes when you try to formalize it, where tooling helps and where it doesn’t, and what real progress looks like, even when it’s far from perfect. That’s something we see every day at Custify, because complexity isn’t always about the number of customers.

Sometimes it’s about what’s behind each customer: the products they use, the history, the technical depth. That’s when visibility starts to break. And that’s exactly where a platform like Custify can help, not to replace the expertise, but to make sure it doesn’t stay locked inside one person’s head.

Before we start the discussion, a few housekeeping notes. The session is being recorded. I know the number one question I get is whether and when you’ll get the recording. For sure you will.

And please don’t be shy, ask your questions. Both Fred and Henry are here to answer the questions. I’ll make sure I monitor the chat.

We’ll try to pick up the questions as we go if they are relevant in the context of the conversation; if not, we’ll save some time for the Q&A at the end. And now, because we also have a few people joining us live, I wanna take a pulse from everyone.

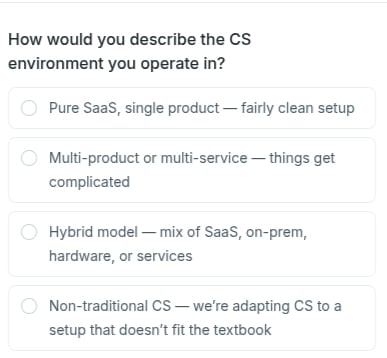

And I wanna ask you, how would you describe the CS environment that you guys are operating in? So give me a chance to publish the poll.

Henry (4:13 – 4:15)

Yep. Okay, perfect.

Irina (4:16 – 5:42)

Okay, perfect. I hope everyone can see it. Let’s give it a few minutes for everyone to cast their votes.

Okay. People were shy at the beginning, but we have hybrid, we have non-traditional, we have multi-product. Don’t be shy, don’t be shy.

Okay, a few more minutes. Okay, how are things going? Non-traditional, of course.

Well, so while you guys are voting, it looks like you are living in that messy middle, which is perfect, because that’s exactly what today is about. No clean playbooks, only decisions in complex environments, because that’s the reality that we live in. And I suggest we get started.

And I’ll start with you, Henry, if you don’t mind. Why does CS feel more complicated when you are operating in a mix of on-prem and hybrid setups, with hardware plus software? What are the things that simply don’t work the way CS books suggest?

Why customer success gets harder in multi-product environments

Henry (5:44 – 7:17)

I think we’ve done a fair bit of thinking about this. The biggest difference is that the different license types and product types—including hardware and software—have very different user journeys and value points. Because of that, traditional playbooks designed for single-product SaaS environments, as you mentioned earlier, make it difficult to create a boilerplate approach that works for everything.

That’s one of the biggest differences for us: you have to think about each product individually. Even within that, our flagship product covers multiple personas, and those personas are quite different. They fulfill different functions within mining or technical environments, and they often interact only at the beginning before their paths diverge. Designing those different pathways and understanding what’s happening across them is one of our challenges, and it’s something we’re using tools like Custify to help solve.

Fred might have a comment on that one as well.

Fred (7:18 – 8:22)

Just to build on what you’re saying, Henry, to give people an idea: we have three flagship products, and they each have very different histories in terms of how long they’ve been on the market. That means different technologies are in play, different interfaces, and on top of that we have around a dozen scaling products that serve a wide range of personas, each with a different value proposition.

So trying to understand health and risk—or even manage a customer through a single contact point—is extremely difficult. Different user groups are using different products, and they may have different levels of satisfaction depending on their persona, the product they use, and their level of training.

So, it can be quite complicated.

What a healthy customer relationship actually looks like

Irina (8:23 – 8:40)

Speaking of this, because you mentioned different setups, different personas, and different types of flagship products, I’m curious: what does a healthy customer relationship actually mean for you, Fred, in your line of business? How do you define success?

Fred (8:42 – 9:42)

That’s something we’ve thought a lot about, as Henry mentioned earlier. When we created the health score, we tried to bring in different elements—communication, product usage, and support—to capture the different dimensions of how we interact with the customer and get an accurate reflection of health.

But if you strip all that back, the most important thing is having good communication with the customer. You want multiple points of contact where you feel there’s a strong relationship. And you also need to understand the challenges they’re facing and make sure you’re making progress toward solving those challenges.

The signal that the old CS model had reached its limit

Irina (9:45 – 10:32)

You both came from environments where CS is very relationship-driven, and your teams knew their customers well and had a lot of expertise, that’s for sure. As I mentioned, you are a 40-plus-year-old company, so you have a long-standing tradition. For a long time this approach worked, but eventually something had to change.

What were the first signals that told you that the old way of doing CS was no longer enough and you had to change something? Henry, do you want to tell us more about this?

Henry (10:33 – 13:32)

Yeah, well, CS is new to us. As you mentioned, our company is very technical. Fred, his background is in mine engineering and mine planning.

He worked on mine sites. He works in consulting, delivering technical work to customers. Myself, I’m the same.

I’m a geologist and I’m very technical. I understand all the tools. I’ve used them for my whole career and have grown up with them like a lot of our customers have.

But what changed for us was actually the technology industry itself; I think that’s the biggest point to make. When we first released our products in 1981, we were a transactional sale followed by reactive support. Those relationships were built because the products had longevity within an organization.

Customers made a long-term investment in that product. And so getting them working and then supporting them was where the relationships were built. The change to more of a subscription model, along with the rest of the technology industry, has meant we have to look at that differently.

And we still have customers that operate across that hybrid. It isn’t that some customers are still on perpetual licenses and separate customers are on subscription products. We have customers that do both.

And that in itself has its challenges around how we support those customers and ensure that they’re getting value. So it was more of a business-driven, or really industry-driven, process, where we had to adapt what we were doing in order to continue supporting our customers. That was probably the biggest thing that changed.

Relationships are still great. As you said, we were already doing that relationship management. I think the challenge for us now is moving from that reactive relationship management and support to being more proactive, being able to be in front of the customer and looking further ahead.

And I think that’s what the tooling we’ve been setting up is helping us do: giving us more visibility into what’s actually happening within the organizations and helping us work out which specific pathways within the product environment are delivering value to the customers.

Moving from reactive support to intentional customer success

Irina (13:35 – 14:12)

Let’s go into concrete things. Tell us how you guys actually upgraded, or moved to the next level, in terms of customer success with this setup. You said it was a business-driven decision.

So something changed in the business, and basically the CS organization was forced to adapt. How did you actually move from the old setup to the new one?

Fred (14:14 – 16:19)

I think we still are—that’s part of the challenge. But we’ve had tremendous growth this year with our internal team and the use of the tool. We now have about three times as many people using the platform as we did in January 2025.

Speaking to Henry’s point, we’ve always had people doing great customer success activities, but it was more in a reactive state. Custify has given us a lot of visibility into what’s happening at the account level—from a support perspective, a communication perspective, and a product usage perspective—so it creates a much clearer picture.

Some of the communication tools in the platform were also an easy win early on. Getting our internal teams on board was easier once they realized they could do simple things like send an email directly from the customer’s record in Custify.

The business change wasn’t about starting to do customer success—it was about making customer success more intentional and building it into the processes. Custify really helps with that. We’re certainly not masters of automation, but we’ve put a lot of work into building simple automations that outline the process from pre-sales to post-sales.

Henry, I don’t know if there’s anything you want to add to that.

Henry (16:20 – 18:07)

I think the thing that drove most of that growth was the visibility of the data. Now, that’s not saying the data wasn’t visible before. There were a couple of elements that weren’t as visible as they are now, but it was still possible to access the data. You just had to look in many different places to find it.

What Custify allowed us to do was bring a clearer 360 view of what the customer was actually doing and give that information upfront to the people who needed it. That drove large growth in platform usage and helped us move toward becoming much more proactive in our engagements.

What sat behind that, technically, was a lot of data management work. We had a colleague who spent a lot of time working with us on data management and data flows to push the information we needed into Custify. From a tactical perspective, that was probably the biggest component that moved us forward.

Custify is a great platform for visualizing that information, but there was a lot of work behind the scenes to deliver that data and get us to that point.

Sorting through messy data and deciding what actually matters

Irina (18:08 – 18:45)

When you say data management, can you be more specific? What data points were you trying to bring in, how did you guys approach the data hygiene process, and what did it involve from your perspective? Because data is something I’m hearing a lot about: we don’t have the data, there’s no clean data, we’re somewhere in between. How did you sort it out?

Henry (18:47 – 22:11)

Yeah, I think there have been a few patterns in that, and we’ll be totally honest—we don’t have clean data. There’s still a lot of work to be done. We’re a nearly four-and-a-half-decade-old company that’s been collecting data for that entire time.

The first step was deciding what parts we actually wanted to bring through. You asked about data points—so the first things were contact records for users and company records. We had to decide which ones we wanted and bring them through from our CRM. After that, we started looking at product telemetry: what does product usage actually look like, license engagement, feature engagement.

Again, that’s been really complicated. Some of our products are multiple decades old and weren’t originally designed to capture that kind of information because it simply wasn’t something people were doing at the time. Over the years there have been many additions and changes, which created a huge amount of noise in the data.

One of the key things we focused on was deciding how to simplify it, because you can’t look at everything. A lot of that noise doesn’t actually give you a clear signal. So we started by asking, what are the things we want to look at? But when we examined them, we realized they weren’t always telling us what we expected. That meant going back and redefining what we should actually track.

Product engagement, support cases, and account notes from the people interacting with customers are critically important. Having a clear view of those conversations across the business helps a lot. In the old way of working—and you mentioned this earlier, Irina—a lot of that knowledge lived in people’s heads because they were the ones engaging directly with the customer. But in a true CS model, we need multi-functional engagement with customers at different levels.

That means all of that information, all those data points, need to feed into the system so everyone has a shared view of the customer.

Fred (22:12 – 23:44)

Yeah, I’ll just add a couple of other points about the data. I liked what you said about signal versus noise, because we’ve definitely listened to a lot of noise that we thought was going to be signal. You talked mostly about user-generated data, but we also have revenue data and license entitlement information from our contracts. That data is also very important from our outlook and process perspective.

One of the first mistakes we made was that it was very easy in Custify to generate even more metric-type data from what we already had. We thought it would be interesting to look at things like X divided by Y or whatever metrics we could come up with. Then we realized we had too many—we probably didn’t need that many.

So one takeaway is that we have thousands of telemetry keys, and it’s very easy to get overwhelmed with data. At the beginning, it’s hard to know what will actually be useful. That experience has pushed us to approach problems in the simplest possible terms, because we know it will probably become more complicated anyway.

Henry (23:45 – 25:05)

So we went back to the drawing board a bit. Fred mentioned that we have thousands of telemetry keys, but not every one of those actually leads to a customer outcome, a user outcome, or a business outcome.

We had to go back and ask: which ones actually matter? Is it a single item, or is it a group of actions that together deliver the outcome? Often, multiple things need to happen before the outcome is achieved. Simplifying the data in that way helped us get the visibility we needed to understand what was actually happening.

The next phase for us—and we’ve been talking about this recently—came from an idea our colleague Kono suggested. It’s about separating user outcomes from business outcomes. In other words, what the user needs to do during their operational day versus what business value comes from that activity. Creating visibility around those distinctions is probably the next step for us.

Process redesign, automation attempts, and what failed first

Irina (25:06 – 25:34)

So you went from “we want to track everything, let’s bring in all the data that we can” to “hold on, it might be too much, so let’s strip it down and start with the basics.” And if I got it correctly, you also tried to map it to customer outcomes and user outcomes.

Did I get it right?

Fred (25:36 – 25:38)

I think that’s pretty much right. Yeah.

Irina (25:39 – 26:52)

Okay. Now, these data points, the data management, and gathering as many data points as possible into one system are also strongly correlated with your business processes and flows. So I’m thinking, what were the processes and flows that you had to rethink in order to adapt to the new reality?

How were you doing things before, and how are you doing them now? At the beginning of our discussion you said you started automating a set of things. What were the concrete things you started automating, and the processes you started mapping? Maybe you wanna take this, Fred, or Henry; I’ll let you decide, or maybe you both share.

Fred (26:53 – 27:01)

I’ve got something in mind. Maybe Henry can think of a success because I’m gonna start with a failure.

Irina (27:02 – 27:10)

Please do, please do. Let’s talk about the uncomfortable things because it’s not always easy.

Fred (27:11 – 29:17)

Just in the context of our discussion, one thing we were eager to automate was a risk mitigation workflow. The idea was that if a customer moved from green to red, or from red to yellow in the health score, it would trigger certain actions.
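As a rough illustration of the workflow Fred describes, here is a minimal Python sketch of a transition-triggered playbook. The band names come from the conversation; the `PLAYBOOK` table, the task wording, and the function name are hypothetical and do not represent Custify’s API or Maptek’s actual configuration.

```python
# Hypothetical lookup table: (previous band, current band) -> follow-up tasks.
# The two transitions are the ones mentioned in the conversation; the task
# descriptions are invented for illustration only.
PLAYBOOK = {
    ("green", "red"): [
        "Notify account owner",
        "Schedule risk review call",
    ],
    ("red", "yellow"): [
        "Confirm recovery with customer contact",
    ],
}


def tasks_for_transition(previous: str, current: str) -> list[str]:
    """Return the follow-up tasks for a health band change (empty if none apply)."""
    return list(PLAYBOOK.get((previous, current), []))


if __name__ == "__main__":
    for change in [("green", "red"), ("red", "yellow"), ("green", "green")]:
        print(change, "->", tasks_for_transition(*change))
```

Because every matching band change creates tasks, a score that moves often translates directly into task volume, which may be one reason a workflow like this can feel overwhelming early on.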

Technically, we achieved it, but at the time it was too overwhelming. We weren’t as mature as we are now, and we haven’t been able to revisit it fully yet. What may end up replacing it is the AI risk sentiment tools in Custify, which we’re currently testing. We’ve been running them, but we haven’t had a chance to thoroughly evaluate the results yet—things like benchmarks, how often the signals were correct, and examples of where they were right or wrong.

One of our early automations was a fairly complex playbook that triggered different follow-up tasks for people. But we just weren’t ready for it, and the result was a lot of undone or incomplete tasks.

Sometimes you see ideas like this in CS SaaS content and think, “That’s a great idea.” But in the real world, it can be more challenging than just setting it up and hitting go. So that’s something we need to revisit and rethink.

That said, we do have quite a few automations that have been unexpectedly successful. I’ll pass it over to Henry to add to that.

Henry (29:19 – 32:32)

I think the key point about the risk management playbooks we created is that they generated a learning for us. And that learning was that our data is quite dynamic when it comes to the user information that we get. It also helped us understand customer usage a lot more, because we saw that within shorter timeframes there’s more variability in those scores than there is over a longer timeframe.

So it gave us an opportunity to take a step back and ask: what happens if we average this data over a longer time and use those averages as our signals? Changes in daily or weekly usage might not actually be affecting users’ overall satisfaction with the product. That in itself was a success, in that it generated a new learning for us. If we hadn’t gone down that path, we might not have got that extra level of insight into what was happening.
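The averaging idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the daily scores, the four-day window, and the band thresholds (`red_below`, `green_from`) are invented, and the team’s real scoring and timeframes are not described in the conversation.

```python
from statistics import mean


def rolling_mean(scores: list[float], window: int) -> list[float]:
    """Trailing mean over the last `window` values (fewer at the start)."""
    return [
        mean(scores[max(0, i - window + 1): i + 1])
        for i in range(len(scores))
    ]


def band(score: float, red_below: float = 40, green_from: float = 70) -> str:
    """Map a 0-100 score to a color band. Thresholds are assumptions."""
    if score < red_below:
        return "red"
    if score >= green_from:
        return "green"
    return "yellow"


def count_changes(bands: list[str]) -> int:
    """Number of times the band differs from the previous reading."""
    return sum(a != b for a, b in zip(bands, bands[1:]))


# Invented daily scores that swing sharply from day to day.
daily = [72, 38, 75, 36, 74, 71, 39, 73]

raw_bands = [band(s) for s in daily]
smooth_bands = [band(s) for s in rolling_mean(daily, window=4)]

if __name__ == "__main__":
    print("raw band changes:     ", count_changes(raw_bands))
    print("smoothed band changes:", count_changes(smooth_bands))
```

With volatile inputs like these, the smoothed series changes band far less often than the raw one, so any automation keyed to band changes would fire less often. The trade-off is that a longer window also reacts more slowly to a genuine decline.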

And I think that’s definitely been one of the guiding principles for us as we’ve gone on testing. Early on, we were very analytical, maybe academic, in what we were trying to achieve and the way we were thinking about things. We actually just needed to put some things in place, even with the noise that we had, and then work through what that led us to.

Strategically, one of the other components is that Maptek has historically operated as five fairly independent regions, and we have rolled Custify out across all of those regions from a global perspective. Fred, Kono and I manage that application and the thinking within it. So what we’re also starting to do is centralize activities, visibility, and those processes.

And another success I think that’s working quite well at the moment is we introduced onboarding life cycles. Onboarding was already being done. We’re not saying that we introduced something completely new.

We tried to build a framework around what was happening within each of the regions and give them the autonomy to still continue with what they were doing but also give visibility within the system as to what was actually happening within those. So that’s one of the more strategic elements that sits over the top of that. Trying to centralize visibility and activity to have everybody kind of working in the same way.

Scaling CS across five regions with different realities

Fred (32:34 – 33:33)

Sorry, just to add one more thing. One surprising automation that became very popular internally was something we built quite casually while experimenting with the functionality.

We created a playbook that sends Slack messages to different regional channels whenever there are new signups to our online licensing platform. At the time, we were really just testing the Slack integration, and it was low stakes, so it was a good experiment. But people ended up really liking it.

Sometimes you don’t know whether something you build will be useful or not, so you have to experiment a bit.

Irina (33:34 – 33:59)

I’m curious, and I think you mentioned before Henry, that there are five different regions that you guys are covering. Are there any differences in terms of the way CS is done in different regions? Are there different things?

Henry (34:00 – 35:58)

Yeah, very much so. Very much so. Cultural differences, even in the way business is done in general.

We have operations in Europe, and the European office covers a huge number of countries (I forget the exact number), with multi-language support. We have African operations. Africa and Europe work together.

They’re in the same time zone, but they cover a very, very large geographic and cultural area. In South America, language is one challenge for us. Our colleague Conor is a native South American and speaks Spanish, and Fred and I are both learning Spanish in order to support that a little bit more.

And their business is quite different. Then North America and Asia Pacific. Again, Asia Pacific covers quite a large number of countries and varying customers as well.

So yeah, the way CS is done and the way the relationships have been built over time differ. Some regions have moved faster in applying the things that we’ve asked, and some maybe haven’t been able to, for multiple different reasons. Working within that environment is a challenge from our perspective. But it’s also really great to see how these things get applied and see them moving forward.

It’s nice that we have multiple case studies to show other regions what’s working well and what’s not.

Fred (35:59 – 36:40)

Yeah, I’ll just say that in North America, the US and Canada form one region, and Mexico, Central America and the Caribbean are their own region. But as Henry stated, there are different levels of maturity across the regions, of course. It’s just another thing that we have to work with, and get to work with, but all of our people are great.

So that part’s easy. The hard part is taking something from one region and trying to adapt it to another, or even trying to adopt it across the globe.

What had to be true before the tooling could help

Irina (36:45 – 37:46)

You mentioned how a tool like Custify helps you support CS operations. I wanna ask you, what prep was needed before you implemented the tool? What did you guys need to sort out internally so that, when you decided to adopt a tool, you made the right decision at the right time for you?

That’s one thing when it comes to tooling. And I also wanna invite you to share with the whole audience the part that the tool cannot solve, because a tool is not the solution on its own. I wanna understand how you guys prepared and what the tool couldn’t solve for you.

Fred (37:49 – 40:11)

So anytime we approach anything that we’re gonna implement, any type of process, we try to talk to all the regional teams to understand how they’re doing it right now. One thing we were able to consolidate under Custify that has worked really well for us is both CSAT and NPS surveying. We were using disparate tools across the regions before.

So it’s about working with the teams to understand what their processes were before and making sure that the new process is not gonna leave any gaps. That’s kind of standard operating procedure when we go to implement anything: okay, does this work for everybody? And where the tools sometimes fall down is with some of those idiosyncratic things that are hard to capture, that people inherently know about how they do business in their region.

That’s maybe shifting a little bit to the health score. When we first implemented the health score, we asked the team to really be critical of it, to scrutinize it and make sure that we had the right thing. And even today, sometimes they’ll say, “Oh, this health score is showing this and I don’t think that’s right.”

But that’s one place where you’re probably never gonna be able to capture everything that really goes into health and risk with a customer. Maybe we’ll get a little bit closer with some of the language processing technology that’s available today, but you still have to figure out a way to capture conversations and turn them into usable data. So I do think it cycles back to data: your processes with the tools are only ever gonna be as good as your data is.

So that’s something else that you really need to consider before trying to take anything on.

Henry (40:12 – 41:38)

I think the other element is that Custify is really powerful at giving insights across cohorts and segments of companies, as well as visibility into individual accounts, and then letting us act on that information. So it’s a great platform for actioning activities. What we do outside of it is more of the overall insights.

A lot of our data lives in a data warehouse. We use our business information systems to look at that information in maybe more detail than we’re able to within Custify, and then use those insights to drive activity within the platform. So from a more strategic perspective, that’s probably the difference.

We do need another tool to provide that business information layer for big-picture insights, rather than the more specific, actionable insights that we can get within Custify.

How the team learned to trust the data

Irina (41:41 – 42:09)

Having data and trusting data are two different things. And I think, Fred, you touched on it in your previous answer when you mentioned health scoring. I’m curious, how long did it take before people trusted the data enough to actually use it in the decision process, not just look at it?

Fred (42:11 – 43:35)

Yeah, I think there are probably some people who still don’t exactly trust it, but that has to do with familiarity and maybe the time in the tools, or how mature their usage of Custify is. Because it’s our instance, they start to get comfortable over time, probably in just a couple of weeks, but it did take us months. We encouraged feedback, and we certainly got people messaging us saying, “Hey, this is my customer, this is the score, I don’t think this is right. Why is this the score?”

We answered so many “why is this the score” questions that we actually built a tab. When you guys introduced custom layouts, we created a tab that has every component of our health score on one page, so that you can go to that page on the customer record, look at all the scores there, and understand: okay, it’s gone down because this specific score has gone down, and that’s 5% of the score, or what have you. We probably created an overly complex health score, but the better you can display it and explain yourself, the more people can trust it.
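To make the “which component moved, and how much does it weigh” idea concrete, here is a minimal Python sketch of a weighted health score with a per-component breakdown. The component names echo the dimensions mentioned earlier in the conversation (communication, product usage, support); the weights, the example scores, and the function names are assumptions for illustration, not Maptek’s actual model or a Custify feature.

```python
def health_breakdown(
    components: dict[str, float], weights: dict[str, float]
) -> tuple[float, dict[str, float]]:
    """Return (overall score, contribution of each component in score points).

    `components` maps a component name to a 0-100 score; `weights` maps the
    same names to relative weights, which are normalized to sum to 1.
    """
    total_weight = sum(weights.values())
    contributions = {
        name: components[name] * weight / total_weight
        for name, weight in weights.items()
    }
    return sum(contributions.values()), contributions


def explain_change(
    before: dict[str, float], after: dict[str, float], weights: dict[str, float]
) -> list[tuple[str, float]]:
    """List each component's effect on the overall score, biggest drop first."""
    _, old = health_breakdown(before, weights)
    _, new = health_breakdown(after, weights)
    deltas = {name: new[name] - old[name] for name in weights}
    return sorted(deltas.items(), key=lambda item: item[1])


# Hypothetical weights and scores, for illustration only.
WEIGHTS = {"communication": 0.4, "product_usage": 0.4, "support": 0.2}
last_month = {"communication": 80, "product_usage": 70, "support": 90}
this_month = {"communication": 80, "product_usage": 50, "support": 90}

if __name__ == "__main__":
    score, parts = health_breakdown(this_month, WEIGHTS)
    print(f"overall: {score:.1f}")
    for name, delta in explain_change(last_month, this_month, WEIGHTS):
        print(f"{name}: {delta:+.1f} points")
```

Showing the contributions next to the total is the same idea as putting every component on one page: a reader can see which input moved the score instead of having to ask.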

Irina (43:36 – 44:10)

Speaking of this, what did you have to change internally to get there, to get people to trust the data? Was it the expectations, the process, the constant internal communication, the visibility of the definition? What did you guys do internally to make people trust the data, or to start getting fewer questions like “why is that the health score, when my customer is perfectly okay?”

Henry (44:11 – 46:28)

I think it was making that visible and definitely constant communication, reinforcing the state, the position that we’re at. Like Fred said, making it more visible as to actually how those health scores are created. We operate across disciplines, that’s why we hold.

And so I look after geoscience, Fred looks after mine engineering, and our colleague looks after our geomatics and surveying and geotechnical area. And we have separate health scores for each of those disciplines that then feed into a global health score. And that adds a lot of complexity.

There are also health scores for the individual products used within those disciplines that feed into it. So it is quite a complex health scoring system. So making that visible, more visible than it was previously, has definitely made a difference.

I think that seems to be one of the things that has led people to be more trusting of it. There’s still a lot that we can do to improve those. And so it’s that constant, continuous improvement as well.

It’s very rare that we pull something together and then we’re not changing it or adapting it again within the next six months in order to help with that adjustment, that trust of the data. Internally, I think starting to bring CS roles into the regions where we haven’t had those before, and actually starting to make people responsible, is another component of that as well. So although, as we talked about, we had people doing those roles, they were technical people.

And I think that’s been part of it. Actually creating a CS organization within the business is another element that we have to do in order to move forward.
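The roll-up Henry describes earlier in this answer, product scores feeding discipline scores that feed a global score, can be sketched as nested weighted averages. This is only an illustrative sketch: the product names, weights, and scores are hypothetical, and the transcript does not say how Maptek actually weights each level.

```python
def weighted_average(pairs):
    """Weighted average of (score, weight) pairs."""
    total_weight = sum(weight for _, weight in pairs)
    if total_weight <= 0:
        raise ValueError("total weight must be positive")
    return sum(score * weight for score, weight in pairs) / total_weight


def discipline_score(products):
    """products maps a product name to a (score, weight) pair."""
    return weighted_average(list(products.values()))


def global_score(disciplines):
    """disciplines maps a discipline name to a dict holding its weight
    and the product scores that roll up into it."""
    return weighted_average(
        [(discipline_score(d["products"]), d["weight"]) for d in disciplines.values()]
    )


# Hypothetical account using products from two disciplines.
account = {
    "geoscience": {
        "weight": 2,
        "products": {"product_a": (80, 1), "product_b": (60, 1)},
    },
    "mine_engineering": {
        "weight": 1,
        "products": {"product_c": (90, 1)},
    },
}

for name, discipline in account.items():
    print(name, discipline_score(discipline["products"]))
print("global", round(global_score(account), 1))
```

Keeping each level as its own function means the intermediate discipline scores stay available for display, which is the visibility the speakers credit with building trust.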

Fred (46:30 – 47:44)

Yeah, I’ll just add that it’s been a lot of, and Henry pretty much said this, but it’s been a lot of internal marketing and communication. For a while, in our quarterly reports to the business, which get sent out to everybody, we were including a table.

We would ask for submissions from the regional people about who they were working with. So we would put in blurbs about what was going on, and then we would also include a table that had some of the different metrics, including the global health score, for the customers that were mentioned. And so people could at least try to read the data in the context of the story and connect the dots to the scores.

So I don’t know if that actually helped or not, but I like to think that it did. And yeah, it’s been a learning process for all of us because, like I said, Maptek’s been doing a lot of these activities over time, just in a different way, very reactive.

So at first, I think some of the people felt like, okay, I have the same job, just it’s a different title. So kind of trying to move forward and into the future, with a new way of doing things.

Henry (47:45 – 48:37)

Trying to press that proactive nature. And when we say we’ve done that relationship work, that relationship work was very much within individuals and with certain accounts. It wasn’t necessarily with everything.

So that’s another component that maybe we didn’t touch on earlier. What the CS processes that we’re putting in place now, the centralizing, the tooling, allow us to do is be a lot more scalable than we were previously. They were more one-to-one, and now we’re trying to create more of that one-to-many, to help people manage more accounts, or work with more accounts, and be proactive in that nature.

So that’s the other. Yeah, Robin.

When the work finally started to feel real

Irina (48:38 – 49:23)

I wanna stay on this thought, Henry, because I think we talked a lot in this hour about the things that were hard, that were difficult, that were uncommon. And since we are approaching the end of our discussion, I wanna end it on a positive note. So I wanna ask you guys, when did it start to feel like it was actually working, after all those discussions and effort and everything?

When did you start to see the light at the end of the tunnel? When did it feel like, okay, we are on the right path?

Henry (49:27 – 50:47)

I think, to be honest, it took a while. There was a lot of work to be done. And I would say the first year, probably, felt like we were doing a lot without seeing anything move very much, but then we started to really pick up.

Yeah, so it’s not instantaneous, not for us anyway, not with the data issues and the complexity of our business. It took us at least a year to start to see movement. And a lot of conversations and a lot of support from our amazing CSM at Custify to help us get there.

Absolutely. You guys have been really supportive and really helped us, not just in the platform, but as we said, Fred and I and Kono, we’re all technical people. We are learning customer success as we go.

So that support from an actual CS role has been really, really great for us. Not only do you provide great tools, but you also provide great advice.

Fred (50:50 – 51:33)

Yeah, I would agree for sure on that point. And I would also just say, I feel like it was when people started to request certain things, or started to get ideas about how they would wanna use the tool. And even though sometimes it was frustrating, because maybe it was in the opposite direction of what we were trying to achieve between the three of us, or even what the business goals are, people started to at least see the tool and understand some of the potential.

So they would say like, hey, I wanna make this template or, hey, I’d love to make this playbook. So I guess when they started to get excited about the tool in that way, that felt good.

Advice for CSMs working in the messy middle

Irina (51:35 – 52:22)

Last question, at least from my side, and I know that we also have a few from the audience. I know a lot of people watching right now are in the middle of what you guys were describing. And I wanna ask you, for those CSMs who are not sure if what they are doing is working or not, what should they pay attention to instead of those big wins or instant, I don’t know, gratification moments?

What’s your advice for them? Pay attention to what? Because this is an indicator that, okay, you are moving in the right direction.

Fred (52:23 – 52:49)

I think for people who are actually out there, CSMs doing the work with the customers, you have to understand what their objectives are, document them, and just work on achieving them. If you do that and nothing else, then it doesn’t matter what kind of systems you have or what else you’re doing, that’s the number one thing.

Irina (52:53 – 53:11)

I’ll try to address the questions that we have in the chat. So, looking back on Maptek’s CS department growth in the past two or three years, what would you have done differently? Sergey is asking.

Henry (53:20 – 54:30)

It’s a really hard question to answer. There are so many avenues we could have gone down that might have worked differently. The biggest thing, I think, maybe would have been a better understanding of our data before we embarked on the process.

The learning we’ve gone through, the data engineering we’ve needed to do, it needed to happen, so we were doing it anyway. But I think the learnings we’ve gone through in doing that maybe would have sped us up if we had focused on them before embarking on delivering it to the users. I think in some cases it’s made it more difficult than it seems.

That’s because of the fluctuations in what’s happening with the data.

Fred (54:33 – 54:41)

I think we don’t have, Henry and Kono and I, we don’t have unlimited resources.

Irina (54:45 – 54:51)

Fred, I think I’m losing you. Can you speak closer to the mic?

Fred (54:51 – 55:54)

Oh yeah, can you hear me now? I’m speaking louder. Okay. I think if I could go back in time, I’d probably try to think more intentionally about a phased build-out.

Maybe we tried to take on too much or too big, we were thinking big, so we were excited about that. But just trying to start with something very simple and small, implement that and then build from that. That’s probably, I think for me, the biggest takeaway.

I think where we’ve got to, it’s been fine. We wouldn’t know that if we hadn’t done what we did. But yeah, I think if I could go back in time, that’s what I would try to do: really understand how we can build this out in phases, and just build simple building blocks so that we could bring everybody along.

Because not everybody wants to come at the same speed as the people who are trying to build out an organization or lead. So that’s, I guess, my reflection.

Irina (55:56 – 56:05)

And one more question. Did you use the same metrics or criteria for all customers, or different outcomes for different users?

Henry (56:10 – 57:09)

Yeah, we don’t have all customers working with the same product. So we have had to do that. Each product, each persona within that product has its own set of outcomes.

So we have had to separate those and identify them and generate the goals, the health goals, and some of the processes around those. And we talked a little bit about the onboarding. We’re trying to put an overarching onboarding process in place, but one that leaves space within it for those specifics to be managed in the context of what the customer is trying to achieve.

But it still monitors the onboarding process itself, or gives us visibility of where organizations are within that. So yeah, we have had to spend quite a lot of time designing some of that and implementing it.

Fred (57:11 – 58:12)

Yeah, not necessarily specific to outcomes, but we have spent a great deal of time trying to understand segmentation in different ways and implement that. And I’ll just say, because I’m sure some people are interested, that it’s a challenge. And you’re probably going to end up back where we did: we didn’t really want to focus on revenue, but it seemed like we always ended up back in that space because it’s difficult not to.

So it’s going to be hard, but just keep trying, I guess. I think it’s extremely important to understand, especially if you don’t have a lot of money or a ton of resources, how you can have those relationships that are meaningful and not stretch your people so thin that they’re overloaded and burned out. So it’s critical.

Irina (58:14 – 59:49)

All right, we are going to wrap it up here. Henry, Fred, thank you both so much for being so open today. You didn’t give us the polished version.

You gave us the real one. What was hard? What was slow?

What surprised you? And what’s still in progress? And I think that’s exactly what people came here for.

And I really appreciate this part. For everyone watching, if what Henry and Fred described resonated with you, that feeling of customer knowledge living in people’s heads, of not having a shared view of what’s happening across accounts, or maybe needing to move from gut feel to something more structured, that’s exactly the kind of challenge Custify was built for.

As you guys also mentioned, we help CS teams centralize their customer data and build health scores, and we talked about those, health scores that actually reflect reality and create the visibility you need to switch from a reactive to a proactive approach.

If that sounds like where you are, we’d love to show you what’s been possible. We’ll send the recording shortly. We’ll be back with our next webinar in April.

More on that soon. Until then, take care. And thanks again for spending the hour with us.

Have a good one. Thank you.